AI — RAG — Domain Adaptation

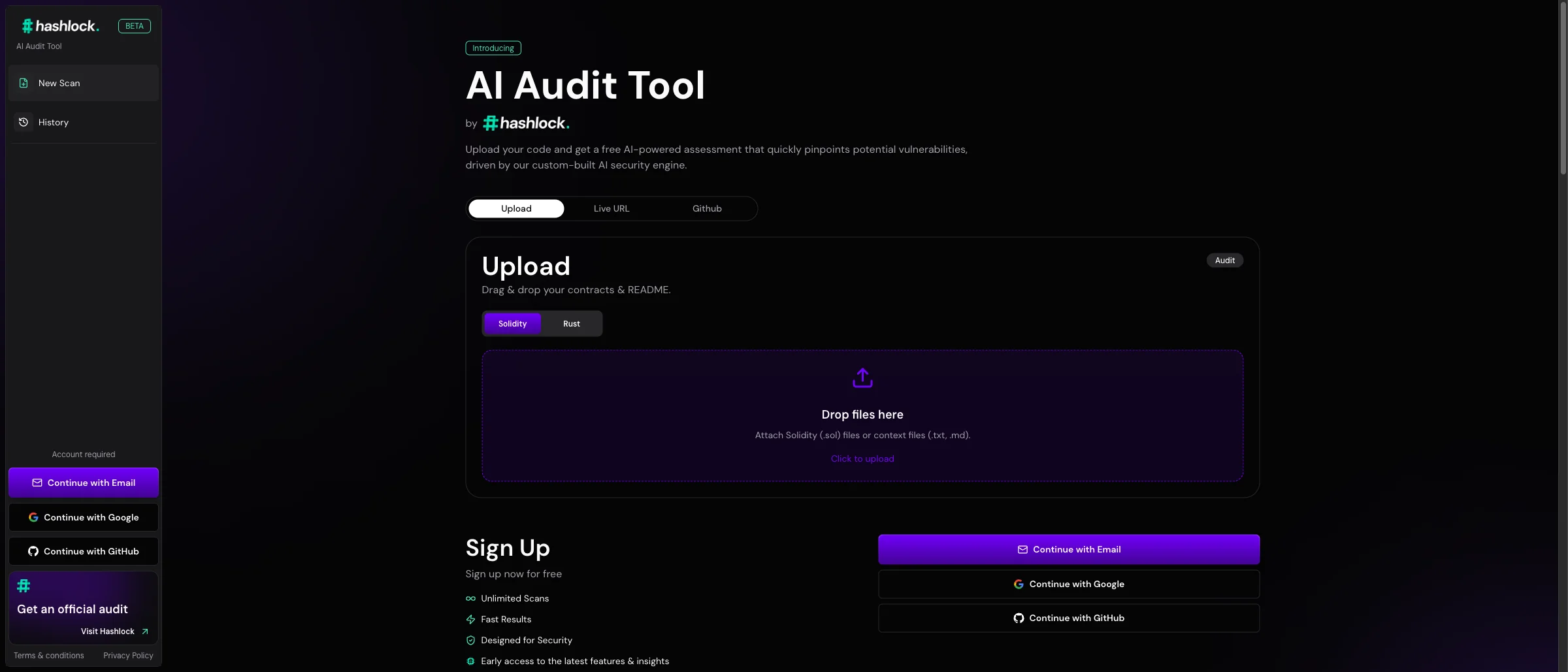

Hashlock AI Auditor

RAG over a curated vulnerability knowledge base — specialising a general foundation model for a narrow, high-stakes domain (Solidity, Rust, Vyper auditing).

General-purpose AI models are remarkable, but they don’t know your terminology, your data, or your business rules.

Engagement

AI Solutions

Typical Duration

4 – 10 weeks

Focus & Stack

We close the gap between general AI and your specific needs. Sometimes that means fine-tuning a model. Often it means smarter prompt engineering, retrieval design, or structured outputs. We use the lightest technique that hits the quality target, and only invest in heavier approaches when simpler ones fall short.

Careful system prompt design with domain context, terminology guides, output specs, few-shot examples. Often resolves the issue without touching the model.

Representative examples in the prompt. Dynamic selection of the most relevant examples for each input.
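
As a rough illustration of dynamic example selection, the sketch below ranks a pool of examples by similarity to the incoming input and places the closest ones in the prompt. The token-overlap similarity, the function names, and the example pool are all assumptions made for illustration; a production system would more likely rank by embedding similarity.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word tokens; a simple stand-in for an embedding model."""
    return set(re.findall(r"[a-z0-9_]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Token-overlap similarity in [0, 1]."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def select_examples(query: str, pool: list[dict], k: int = 2) -> list[dict]:
    """Return the k pool examples whose inputs are most similar to the query."""
    q = tokenize(query)
    ranked = sorted(pool, key=lambda ex: jaccard(q, tokenize(ex["input"])), reverse=True)
    return ranked[:k]


def build_prompt(query: str, pool: list[dict], k: int = 2) -> str:
    """Assemble a few-shot prompt from the selected examples."""
    shots = select_examples(query, pool, k)
    parts = [f"Input: {ex['input']}\nOutput: {ex['output']}" for ex in shots]
    parts.append(f"Input: {query}\nOutput:")
    return "\n\n".join(parts)


# Hypothetical example pool, invented for illustration only.
EXAMPLE_POOL = [
    {"input": "external call before balance update in withdraw", "output": "reentrancy"},
    {"input": "unchecked arithmetic on token balance", "output": "integer overflow"},
    {"input": "owner check missing on mint function", "output": "access control"},
]
```

Calling `build_prompt(new_input, EXAMPLE_POOL)` yields a prompt whose examples change with each input, so the example pool can grow without the prompt growing with it.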

Terminology glossaries, style guides, business rules retrieved and included at query time. Change the documents, change the behaviour.
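
One minimal way to picture this, assuming a small in-memory glossary and exact term matching (both hypothetical simplifications), is to look up the terms that appear in a query and prepend their definitions to the system prompt:

```python
import re

# Hypothetical glossary; in practice this would live in editable documents.
GLOSSARY = {
    "reentrancy": "A callee re-enters the caller before its state is updated.",
    "slippage": "Difference between the expected and executed trade price.",
    "oracle": "An external data source a contract trusts for prices or events.",
}


def retrieve_terms(query: str, glossary: dict[str, str]) -> dict[str, str]:
    """Return glossary entries whose term appears as a whole word in the query."""
    words = set(re.findall(r"[a-z0-9]+", query.lower()))
    return {term: text for term, text in glossary.items() if term in words}


def build_system_prompt(query: str, glossary: dict[str, str]) -> str:
    """Prepend the retrieved definitions to a fixed instruction."""
    hits = retrieve_terms(query, glossary)
    if not hits:
        return "Answer using general knowledge."
    lines = ["Use these definitions when answering:"]
    lines += [f"- {term}: {text}" for term, text in sorted(hits.items())]
    return "\n".join(lines)
```

Editing an entry in `GLOSSARY` changes the next prompt that mentions that term, with no retraining involved. Real retrieval would typically use a search index or vector store rather than exact word matching.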

JSON schemas, enum values, template-based text. Constraining the output space eliminates many domain adaptation issues.
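
To make the idea concrete, here is a small sketch that checks a model's JSON output against a fixed set of fields and enum values before anything downstream uses it. The field names and severity levels are hypothetical, and the hand-rolled checks stand in for a full JSON Schema validator.

```python
import json

# Hypothetical schema for illustration: allowed fields and enum values.
SEVERITIES = {"low", "medium", "high", "critical"}
REQUIRED_FIELDS = {"title": str, "severity": str, "line": int}


def parse_finding(raw: str) -> dict:
    """Parse model output and reject anything outside the allowed shape."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on malformed JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    extra = set(data) - set(REQUIRED_FIELDS)
    if extra:
        raise ValueError(f"unexpected fields: {sorted(extra)}")
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in data:
            raise ValueError(f"missing field: {name}")
        value = data[name]
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected_type):
            raise ValueError(f"wrong type for {name}")
    if data["severity"] not in SEVERITIES:
        raise ValueError(f"severity must be one of {sorted(SEVERITIES)}")
    return data
```

A rejected output can be retried or routed to a fallback, so free-text variations such as "severe" or "High!" never reach downstream systems.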

Training on domain-specific examples. Appropriate for high-volume, highly specific tasks where prompt length gets costly or behaviour is hard to describe but easy to demonstrate.

Task-specific models for classification, entity recognition, or specialised tasks where a dedicated model outperforms a general LLM.

What does "good enough for production" look like?

Input-output pairs with domain experts, including edge cases.

Begin with prompt engineering, escalate only if metrics require it.

Every change validated. Improvement must be statistically significant.
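
One possible way to check significance, assuming each evaluation item is scored pass/fail for both the baseline and the candidate, is an exact McNemar test on the items where the two disagree. The choice of test and the function name are assumptions for illustration, not a description of a specific evaluation pipeline.

```python
from math import comb


def mcnemar_exact(baseline: list[bool], candidate: list[bool]) -> float:
    """Two-sided exact McNemar p-value for paired pass/fail results."""
    if len(baseline) != len(candidate):
        raise ValueError("both runs must cover the same evaluation items")
    # Only items where the two variants disagree carry information.
    fixed = sum(1 for b, c in zip(baseline, candidate) if not b and c)
    broken = sum(1 for b, c in zip(baseline, candidate) if b and not c)
    n = fixed + broken
    if n == 0:
        return 1.0
    k = min(fixed, broken)
    # Under the null hypothesis each disagreement is a fair coin flip.
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)
```

A change would be accepted only if the candidate fixes more items than it breaks and the p-value falls below a threshold agreed in advance (0.05 is a common convention).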

Ensure adaptations don’t degrade performance on related tasks.
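
A regression check of this kind can be sketched as a comparison of per-task scores before and after an adaptation. The task names, the score scale, and the tolerance below are hypothetical.

```python
def find_regressions(
    before: dict[str, float],
    after: dict[str, float],
    tolerance: float = 0.01,
) -> dict[str, float]:
    """Return tasks whose score dropped by more than `tolerance`, with the drop."""
    regressions = {}
    for task, old_score in before.items():
        if task not in after:
            raise KeyError(f"task not re-evaluated: {task}")
        drop = old_score - after[task]
        if drop > tolerance:
            regressions[task] = drop
    return regressions


# Hypothetical scores, invented for illustration only.
before = {"classification": 0.91, "summarisation": 0.84}
after = {"classification": 0.95, "summarisation": 0.80}
flagged = find_regressions(before, after)  # flags "summarisation" only
```

An empty result means no tracked task got worse beyond the tolerance; anything else is a reason to revisit the change before shipping it.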

Deliverables

We’ve seen teams spend months fine-tuning when better prompting would have solved it in days. And vice versa. We help you make the right call, then execute it properly.